by Sandrine Rigaud • March 24, 2026

Editor’s Note: This is the second excerpt taken from GIJN’s in-depth report on “The Investigative Agenda for Technology and AI Journalism,” based on a day-long pre-conference event held on November 20 at GIJC25, where 100 investigative journalists, editors, tech experts, and researchers from nearly 50 countries and territories convened to examine the most urgent technology-related challenges and opportunities facing investigative journalism today. Credits and acknowledgments for this project can be found here.

Reporting on AI is often driven by hype and focused on technological novelty rather than on questions of power, accountability, and impact. Too frequently, tech and AI are treated as purely technical subjects instead of being examined as systems of influence that reshape institutions, labor, knowledge production, and democratic processes.

One of the most compelling frameworks discussed during the pre-conference event was proposed by Karen Hao, who urged journalists to place power at the center of AI reporting. Drawing on her experience covering the industry since 2018 and her book “Empire of AI,” Hao argued that comparing leading AI companies to historical empires offers a useful lens for investigative journalism. These companies operate globally and at a historically unprecedented scale, accruing extraordinary economic and political power in ways that often involve dispossessing others.

As she explained, AI companies lay claim to resources that are not their own. They train systems on vast amounts of data scraped from the internet, drawing on the intellectual property of writers, artists, journalists, and creators without consent. They also rely on large-scale, often invisible labor, such as data annotation and content moderation, that is frequently performed in countries of the Global South without a proportional share of economic value flowing back to those workers. Participants also highlighted how these resources (data, compute, talent, and capital) are increasingly concentrated in a small number of firms, reinforcing structural asymmetries that make scrutiny and regulation more difficult.

Debunking Industry Narratives

For investigative journalists, the implications are clear. Covering AI requires examining the power structures and decisions that shape how these systems are built and deployed, and who ultimately benefits from them. This also means questioning the dominant narratives promoted by the AI industry, which often serve to limit scrutiny. Building on the analytical framework developed in Hao’s work and the discussions throughout the day, participants identified four recurring narratives that investigative journalists should challenge.

First, AI is framed as inevitable, an unstoppable force beyond political control, obscuring the human and institutional choices that shape its development and deployment. Several speakers noted that this rhetoric of inevitability is frequently reinforced through geopolitical framings (particularly narratives of technological “competition” or “war”), which discourage critique and present rapid deployment as a strategic necessity rather than a political choice.

Second, AI is presented as neutral and objective, despite evidence that automated systems reflect the values, biases, and data limitations of their creators.

Third, AI is portrayed as immaterial, through metaphors such as the “cloud,” masking its physical footprint, environmental costs, and reliance on hidden labor, particularly in the Global South. Participants emphasized the importance of investigating the material infrastructure underpinning AI systems (data centers, energy and water consumption, extractive supply chains) as well as the communities most affected by these infrastructures.

Finally, the myth of the “everything machine” suggests that a single system can solve complex social problems. These technologies are not neutral, and they “break down” as soon as they are taken out of their original context (San Francisco, the English language, or US culture) and confronted with other global realities.

From Algorithmic Power to Accountability Gaps

Beyond artificial intelligence itself, several speakers stressed the importance of focusing more broadly on algorithmic power. Algorithmic power refers to a relationship of power exercised through automated decision-making, which is already deeply embedded in the decision-making processes of both public institutions and private companies.

As investigative journalist Gabriel Geiger (Lighthouse Reports) explained, algorithms have become integral to core state functions over the past two decades, from welfare distribution and law enforcement to healthcare and housing allocation. This dynamic, for example, was documented in a joint investigation published by Lighthouse Reports, Wired, the Pulitzer Center, and others, which examined how automated fraud-detection systems were deployed across European welfare states. The reporting showed how opaque risk-scoring algorithms systematically flagged claimants as suspicious, leading to suspensions of benefits or investigations with little explanation, limited avenues for appeal, and disproportionate impacts on vulnerable populations. In many of these cases, individuals only became aware of the existence of an automated system after experiencing abrupt, life-altering consequences.

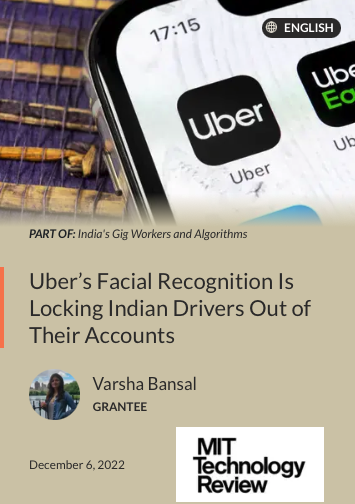

Algorithmic power is equally visible in the private sector, particularly through labor platforms. These systems often operate without clear explanations, leaving workers unable to contest decisions or even understand why they have been penalized. An investigation by Varsha Bansal supported by the Pulitzer Center documented how Uber’s facial recognition system in India automatically locked drivers out of their accounts, cutting off access to income based on opaque algorithmic decisions, with no clear explanation or effective appeal mechanism. Speakers highlighted how this opacity contributes to new forms of labor control and precarity, while shielding companies from accountability.

In both public and private contexts, the concentration of algorithmic power creates profound accountability gaps, especially when systems are shielded by claims of technical complexity or commercial secrecy.

Investigating the ‘Black Box’: Methods, Limits, and Power Imbalances

A recurring problem raised by participants was the “black box” challenge. Journalists are often confronted with systems whose inner workings are partially or entirely hidden, whether because of technical complexity, claims of trade secrecy by private companies, or institutional opacity within public administrations. As a result, reporters frequently have only a fragmented view of how automated systems function and how decisions are actually made.

Speakers emphasized that the black box is not an insurmountable obstacle. Investigative strategies can be adapted to work around limited access to source code.

In public sector cases, participants recommended using freedom of information laws in a layered and strategic way. Rather than immediately requesting complex datasets or source code, journalists can begin with foundational documents, such as procurement contracts, impact assessments, or user manuals, before moving toward more technical materials. Human sources can also provide reporters with internal training materials, presentations, and guidelines. These documents often reveal the assumptions, thresholds, and decision rules embedded in the technology, even when the code itself remains inaccessible.

When direct access to technical documentation is impossible, especially in the private sector, journalists can focus on analyzing the outputs of algorithmic systems. Examining real-world outcomes can make it possible to infer how a system operates and where its biases or failures lie. Investigations such as the one conducted by Rest of World, cited during GIJC25, relied on analyzing the outputs produced by the algorithms themselves to demonstrate how these systems generate and reinforce bias. A Bloomberg investigation into Uber and Lyft in New York City relied on crowdsourced data from drivers to document pay restrictions and platform lockouts. By aggregating workers’ experiences and records, Leon Yin and the other journalists who authored the article were able to reveal patterns of algorithmic control shaping access to work and income, without access to the underlying code.

Another effective approach suggested by participants is to encourage users to export and share their own data. Tools such as Google Takeout or TikTok data exports generate standardized datasets that include metadata not visible through public interfaces. When analyzed at scale, these datasets allow reporters to statistically examine algorithmic behavior and decision patterns. Scraping publicly available data can further complement these methods, enabling journalists to reconstruct how automated systems operate in practice.
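To make the idea of analyzing donated exports "at scale" more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the export format (a JSON list of events, each with a `category` field), the contributor IDs, and the function names are all hypothetical and do not reflect the actual schema of Google Takeout, TikTok, or any other platform. A real analysis would start by documenting the fields each platform's export actually contains.

```python
import json
from collections import Counter


def load_export(raw_json: str) -> list[dict]:
    """Parse one donated export.

    Assumption: the export is a JSON list of event objects, each with an
    optional "category" field. This schema is hypothetical.
    """
    return json.loads(raw_json)


def category_shares(exports: dict[str, list[dict]]) -> dict[str, dict[str, float]]:
    """For each contributor, compute the share of events in each category."""
    shares: dict[str, dict[str, float]] = {}
    for user_id, events in exports.items():
        counts = Counter(e["category"] for e in events if "category" in e)
        total = sum(counts.values())
        # Contributors with no categorized events get an empty result.
        shares[user_id] = {c: n / total for c, n in counts.items()} if total else {}
    return shares


def mean_share(shares: dict[str, dict[str, float]], category: str) -> float:
    """Average a category's share across contributors who have any data."""
    values = [s.get(category, 0.0) for s in shares.values() if s]
    return sum(values) / len(values) if values else 0.0


if __name__ == "__main__":
    # Two hypothetical contributors with made-up events.
    donated = {
        "contributor_a": load_export('[{"category": "politics"}, {"category": "sports"}]'),
        "contributor_b": load_export('[{"category": "politics"}]'),
    }
    per_user = category_shares(donated)
    print(mean_share(per_user, "politics"))
```

Averaging per-contributor shares, rather than pooling all events together, keeps a handful of very active users from dominating the result. Whether that is the right choice depends on the question being asked and on how contributors were recruited.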

Collaborative investigations such as Big Tech’s Invisible Hand, led by the Centro Latinoamericano de Investigación Periodística (El CLIP) together with Agência Pública (Brazil) and 15 other media partners, also show that AI accountability requires examining not only technologies but also the aggressive lobbying strategies of Big Tech companies. These investigations documented the scale of resources deployed, the tactics used, and the global reach of corporate influence aimed at shaping regulation and public debate around AI and digital technologies.

Pre-conference attendees emphasized that investigating AI and Big Tech companies involves a profound power imbalance between journalists and the actors they seek to hold accountable. This concentration of power creates heightened legal and digital security risks for journalists. Aggressive legal tactics, including threats of litigation, non-disclosure agreements, and pressure on sources, were identified as common obstacles for journalists. Several speakers called for greater collaboration across newsrooms, civil society, and academia to mitigate these risks and rebalance power.

Priorities Identified:

- Recenter AI reporting on power and accountability, moving beyond hype and technical novelty.

- Challenge dominant industry narratives portraying AI as inevitable, neutral, immaterial, and universal.

- Develop and share investigative methods to overcome the “black box”, including FOIA strategies, analysis of system outputs, and crowdsourcing.

- Investigate lobbying and influence strategies of Big Tech, recognizing corporate political power as a central dimension of AI accountability.

- Acknowledge and mitigate power imbalances and risks for journalists, including legal pressure and digital security threats.

- Strengthen collaboration and coordination across borders, recognizing that AI systems and the companies behind them operate transnationally.

Sandrine Rigaud is the program director of GIJN. She is an investigative journalist, director, and Emmy-winning producer who served as editor-in-chief of Forbidden Stories from 2019 to 2024. In that position, she led international collaborations to continue the work of reporters who had been assassinated or were under threat, coordinating investigations involving up to 100 journalists and 30 media outlets, including Le Monde, The Washington Post, The Guardian, Der Spiegel, Haaretz, and El País. She teaches investigative journalism at the School of Journalism of Sciences Po Paris and is co-author of “Pegasus: How a Spy in Your Pocket Threatens the End of Privacy, Dignity, and Democracy.” A Nieman Fellow at Harvard in 2024/2025, she worked on global investigative collaborations, leaked data management, and artificial intelligence.

This article was originally published on GIJN.